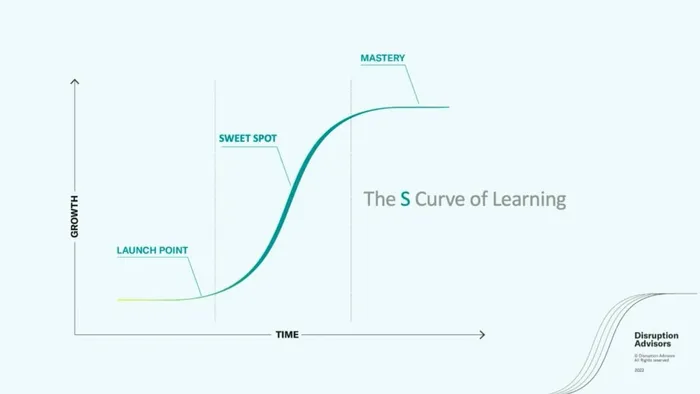

AI is starting to hit the sweet spot: the part of the curve where growth accelerates exponentially.

Image: Supplied

Gautam Mukunda

One of the most compelling theories about the future of artificial intelligence comes, oddly enough, from a 161-year-old paper about the coal industry. In 1865, the English economist William Stanley Jevons observed that improvements to the coal-fired steam engine had not reduced Britain’s coal consumption. Instead, they had massively increased it. Improved efficiency lowered the cost of steam power, which created so many new uses and users that total consumption skyrocketed. This became known as the Jevons Paradox.

Microsoft CEO Satya Nadella invoked it after DeepSeek launched its low-cost AI model early last year, and the numbers bear him out. According to Epoch AI, the price of running a large language model at a given performance level has been dropping at a median rate of 50x per year. And yet OpenAI’s annualized revenue went from $2 billion in 2023 to more than $20 billion in 2025, while its computing capacity tripled in a single year. Costs are collapsing. Spending is exploding. This is the Jevons Paradox in action.

Most efficiency improvements don’t trigger this kind of explosion. LED bulbs became radically more efficient, for example, but there are only so many rooms to light. Internal combustion engines improved steadily for decades, but people don’t drive twice as far just because their car uses less gas.
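One way to put numbers on the difference between coal and light bulbs is a toy constant-elasticity demand model. This is a minimal sketch, not anything taken from Jevons or Epoch AI: the function name `spending_multiplier` and the elasticity values are hypothetical, chosen only to illustrate the point. When demand grows more than proportionally as price falls (elasticity above 1), total spending rises even as the unit price collapses. When demand saturates (elasticity below 1), spending shrinks.

```python
def spending_multiplier(price_drop: float, elasticity: float) -> float:
    """Factor by which total spending changes when the unit price falls
    by a factor of `price_drop`, assuming constant-elasticity demand
    q = k * p ** (-elasticity). The model itself is an assumption."""
    new_price = 1.0 / price_drop               # old price normalized to 1
    new_quantity = new_price ** (-elasticity)  # old quantity normalized to 1
    return new_price * new_quantity            # spending = price * quantity


# Hypothetical elasticities, for illustration only.
scenarios = [
    ("saturating demand (only so many rooms to light)", 0.5),
    ("break-even", 1.0),
    ("Jevons regime (new uses and new users)", 1.5),
]

for label, elasticity in scenarios:
    factor = spending_multiplier(price_drop=50, elasticity=elasticity)
    print(f"{label}: spending changes by a factor of {factor:.2f}")
```

In this sketch, a 50x price drop cuts total spending when elasticity is 0.5 and multiplies it when elasticity is 1.5. The Jevons Paradox is the second case.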

AI is different. The question isn’t whether the Jevons Paradox applies - it’s when.

The answer has to do with how technologies spread. As McKinsey’s Richard Foster showed in his 1986 book Innovation: The Attacker’s Advantage, technologies progress not in straight diagonal lines upward, but in S-curves: slow adoption at the bottom, explosive growth in the middle, saturation at the top. Sail gave way to steam. Vacuum tubes gave way to transistors, which gave way to integrated circuits. Each followed the same pattern.
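The shape of an S-curve is easy to sketch with a logistic function. The logistic is one common way to model adoption and is an assumption here, not necessarily the model Foster used. The names `adoption` and `growth_rate` and all parameter values are hypothetical. The sketch shows the three phases: almost no growth early, the fastest growth at the midpoint, and flattening near the ceiling.

```python
import math


def adoption(t, ceiling=1.0, steepness=1.0, midpoint=0.0):
    """Logistic S-curve: share of the eventual market reached at time t."""
    return ceiling / (1.0 + math.exp(-steepness * (t - midpoint)))


def growth_rate(t, ceiling=1.0, steepness=1.0, midpoint=0.0):
    """Slope of the logistic curve at time t (its analytic derivative)."""
    a = adoption(t, ceiling, steepness, midpoint)
    return steepness * a * (1.0 - a / ceiling)


# Hypothetical timeline: years measured relative to the curve's midpoint.
years = range(-6, 7)
steepest_year = max(years, key=growth_rate)

for year in years:
    print(f"year {year:+d}: adoption {adoption(year):.3f}, "
          f"growth per year {growth_rate(year):.3f}")
print("growth is fastest at year", steepest_year)
```

Under these assumptions, growth in the first and last years is tiny compared with growth at the midpoint, which is why forecasts made on the flat bottom of the curve tend to miss what comes next.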

The critical moment is the inflection point where the S starts to go vertical. Call it the hidden threshold. Below it, the technology works, but it is limited to elites. Only the people with enough money, technical skill, or institutional access can use it, and they kludge their way in. Hedge funds were paying for custom natural language processing tools years before ChatGPT existed. Software developers had access to GPT-3 starting in 2020. The technology was both powerful and restricted.

When well-resourced insiders are spending real money to get access to something, that’s a tell. Demand is lurking, waiting for the technology to become accessible enough.

ChatGPT was not a dramatic leap in raw capability over GPT-3. It was a chat interface layered on top of a modestly upgraded model. But it was good enough, accessible enough and cheap enough that millions of people who had been locked out could suddenly use it. It crossed the hidden threshold. Demand went from flat to vertical: from 100 million users about a year after its November 2022 release to 900 million weekly active users today. And here is the part that matters most for understanding what happened: OpenAI’s own engineers were surprised by the reception. The people who built the product did not see the demand coming.

Below the hidden threshold, improvements are real, but the user base is too small and specialized for the Jevons Paradox to kick in. Above it, the dam breaks. A product stops being an elite pursuit and starts being something everyone can use.

But remember that this is an S-curve: The vertical part does not go on forever. The jet engine crossed a hidden threshold in the late 1950s, for example, when it made air travel fast and affordable enough for the masses. For the next two decades, global air passenger traffic grew at 14% a year. Today, with planes flying barely faster than they did in 1958, that growth rate has settled to a fraction of its former pace.

AI will hit a ceiling too, and for reasons more fundamental than regulation or energy. Intelligence gives people only so much leverage on many problems, even seemingly simple ones. The physicist Michael Berry has calculated that predicting the ninth collision of a billiard ball requires accounting for the gravitational pull of spectators, and that by the 56th you would need to know the position of every particle in the observable universe. If pool tables are that intractable, consider financial markets - where, as George Soros has argued, the predictions themselves change the behavior of the participants, creating a reflexivity that no amount of intelligence can untangle.

It is tempting to believe that AI is the ultimate revolution because we are in the middle of it. But every technology that ever crossed a hidden threshold felt unique to the people living through it. The S-curve for AI will eventually flatten, because all S-curves do. And when it does, the next revolution will not be more AI. It will be something else, something currently sitting on the flat bottom of its own S-curve, where adoption looks negligible and the technology looks like a niche curiosity. Its builders will be looking at moderate projections and assuming that they understand their market.

They won’t. The demand will be latent below a threshold that, when crossed, will make their forecasts look like a rounding error. They will not see it coming. The people building the next revolution never do.